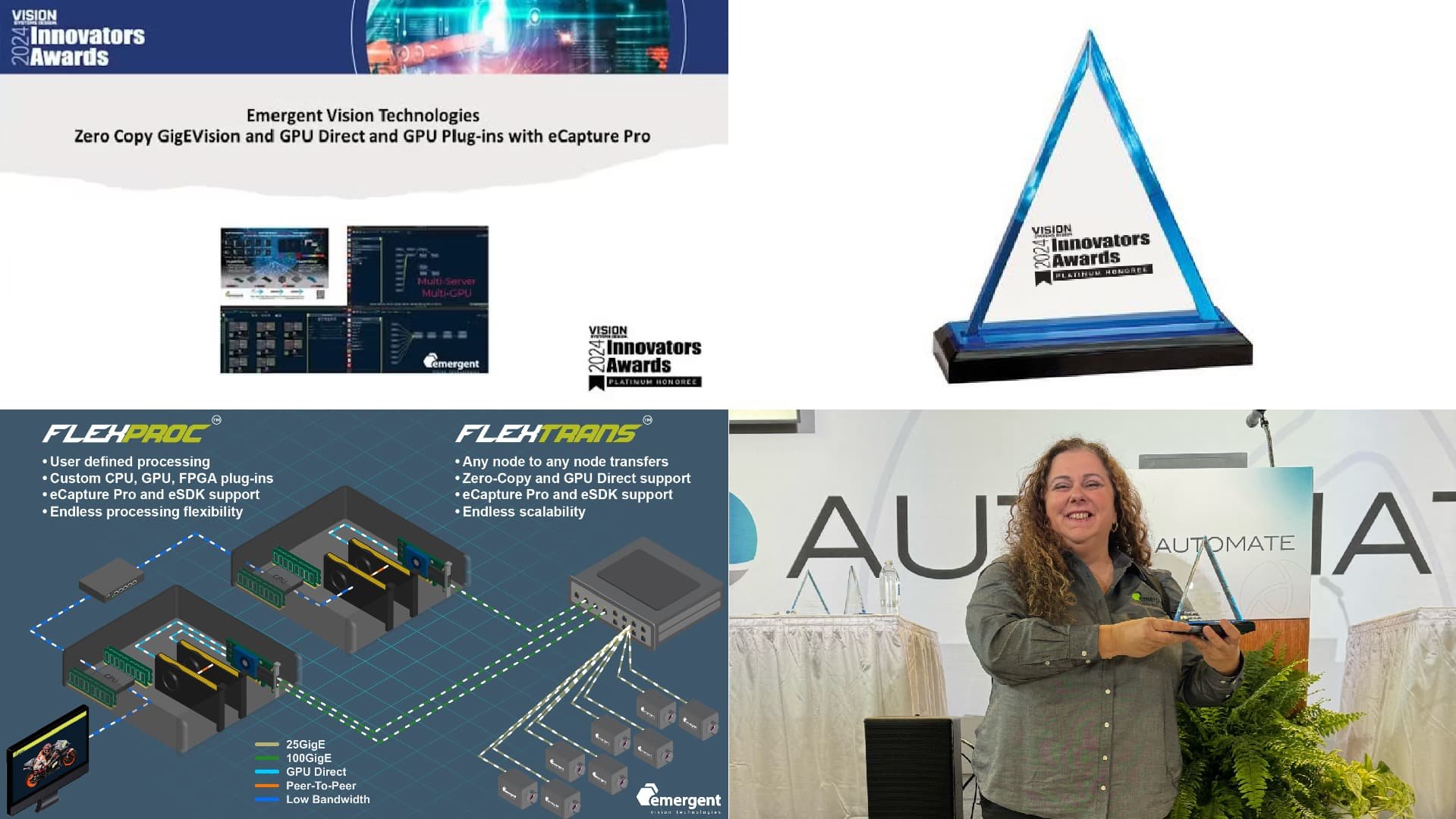

Emergent’s GPU Direct Plug-Ins with eCapture Pro Win 2024 Gold Innovators Award from Vision Systems Design China

Emergent Vision Technologies, a pioneer in high-speed GigE Vision cameras and zero-data-loss vision technologies, was named a gold-level honoree in Vision Systems Design China’s 2024 Innovators Awards for its Zero Copy GigEVision and GPU Direct Plug-ins with eCapture Pro. Judged by a panel of impartial machine vision experts, the annual program celebrates the disparate and innovative technologies, products, and systems found in machine vision and imaging.

Zero Copy GigEVision and GPU Direct Plug-ins with eCapture Pro

Emergent Vision Technologies, a pioneer in high-speed GigE Vision cameras and zero-copy, zero-loss vision technologies, introduces new plug-ins that leverage GPUDirect technology, which supports Emergent’s renowned zero-copy, zero-loss imaging approach. The new built-in plug-ins include polarization, H.265/RTMP (real-time messaging protocol), pattern matching, and inference.

Supported by eCapture Pro software, GPUDirect technology enables the transfer of images from the camera directly to the GPU, which bypasses system memory and the CPU, delivering zero-copy and zero-loss imaging capabilities. In addition, Emergent offers optimal flexibility in camera stream routing to any GPU in a system, which simplifies processing distribution. Supported GPUs include NVIDIA RTX A6000, RTX A5000, RTX A4000, RTX 6000 ADA, Jetson Orin, and Jetson Xavier.

“With the tremendous amounts of data that Emergent’s GigE Vision cameras capture, users need a means for processing that data with top performance,” said John Ilett, president and CTO at Emergent Vision Technologies. “Our GPU plug-in technology is supported within multi-camera and multi-server systems for maximum performance and ease of scalability.”

Custom Plug-Ins

End users can also create custom plug-ins in eCapture Pro that leverage Emergent’s zero-copy, zero-loss GPUDirect approach while writing only the plug-in code. In the link below, the demonstration shows the ease with which a plug-in can be deployed. First, CUDA-based code is written so the plug-in can run on an NVIDIA GPU. The code is then compiled to create the plug-in DLL and loaded into the software using a simple drag-and-drop interface. Lastly, the plug-in is instantiated on the selected GPU, allowing the end user to run the plug-in within eCapture Pro software.

Polarization Plug-In

In eCapture Pro software, end users can leverage the new built-in plug-ins, such as the polarization tool. This tool lets users review the characteristic outputs of a standard polarized processing pipeline, such as the degree of polarization or the angle (direction) of polarization. End users can even remove the polarized light or output one of the four orientation options: 0, 45, 90, or 135 degrees.
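As a rough illustration of what such a pipeline computes, the sketch below derives the degree and angle of linear polarization for a single pixel from the four orientation intensities, using the standard linear Stokes parameters. It is not Emergent’s implementation: the function name `polarization_outputs` and the `PolarizationResult` type are hypothetical, and a real plug-in would run this per pixel on the GPU rather than in Python.

```python
import math
from typing import NamedTuple


class PolarizationResult(NamedTuple):
    intensity: float      # total intensity S0
    dolp: float           # degree of linear polarization, 0..1
    aolp_degrees: float   # angle of linear polarization, in [0, 180)
    unpolarized: float    # intensity left after removing the polarized part


def polarization_outputs(i0: float, i45: float, i90: float, i135: float) -> PolarizationResult:
    """Compute polarization outputs for one pixel (hypothetical helper).

    Inputs are the intensities measured behind polarizers oriented at
    0, 45, 90, and 135 degrees.
    """
    # Linear Stokes parameters from the four orientation channels.
    s0 = (i0 + i45 + i90 + i135) / 2.0
    s1 = i0 - i90
    s2 = i45 - i135

    if s0 <= 0.0:
        # No light at this pixel: every output is defined as zero.
        return PolarizationResult(0.0, 0.0, 0.0, 0.0)

    polarized = math.hypot(s1, s2)
    dolp = min(1.0, polarized / s0)  # clamp against sensor noise
    aolp = (0.5 * math.degrees(math.atan2(s2, s1))) % 180.0
    unpolarized = s0 * (1.0 - dolp)
    return PolarizationResult(s0, dolp, aolp, unpolarized)


if __name__ == "__main__":
    # Light polarized at 45 degrees: brightest in the 45-degree channel.
    print(polarization_outputs(50.0, 100.0, 50.0, 0.0))
```

In this sketch, "removing the polarized light" is modeled as keeping only the unpolarized share of the total intensity, which is one common reading of that operation and an assumption here.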

Deep Learning Inference Plug-in

With the inference plug-in, users can easily add and test their own trained deep learning inference model to perform detection and classification of objects. After training a model in a framework such as PyTorch or TensorFlow, users can add the model to the plug-in, instantiate it on the desired GPU, connect it to the camera, and click “run” to begin, allowing the software to detect and classify objects even while in motion.

Pattern Matching Plug-In

To use the pattern matching plug-in within eCapture Pro, the user first creates a pattern template, loads the plug-in, and then instantiates it on the desired GPU. Next the user specifies the path to the pattern template, connects the camera to the GPU, and runs the plug-in to begin matching patterns, including those on moving objects.

H.265/RTMP Plug-in

Emergent’s eCapture Pro software also offers a plug-in for the H.265 video codec, the successor to H.264. H.265 delivers up to 50% better video compression at the same level of video quality, making it an ideal option for high-speed, high-resolution video. In addition, the plug-in supports RTMP streaming, which enables users to stream high-speed video to YouTube and other live streaming platforms. In high camera count systems, this plug-in can help users handle vast amounts of data. In a 48-camera system leveraging a midrange server, a 48-port switch, and two GPUs, for example, all images can be compressed and stored on a single local M.2 drive while one camera from the system streams to an RTMP client such as YouTube.

A History of Award-Winning Machine Vision Cameras and Software

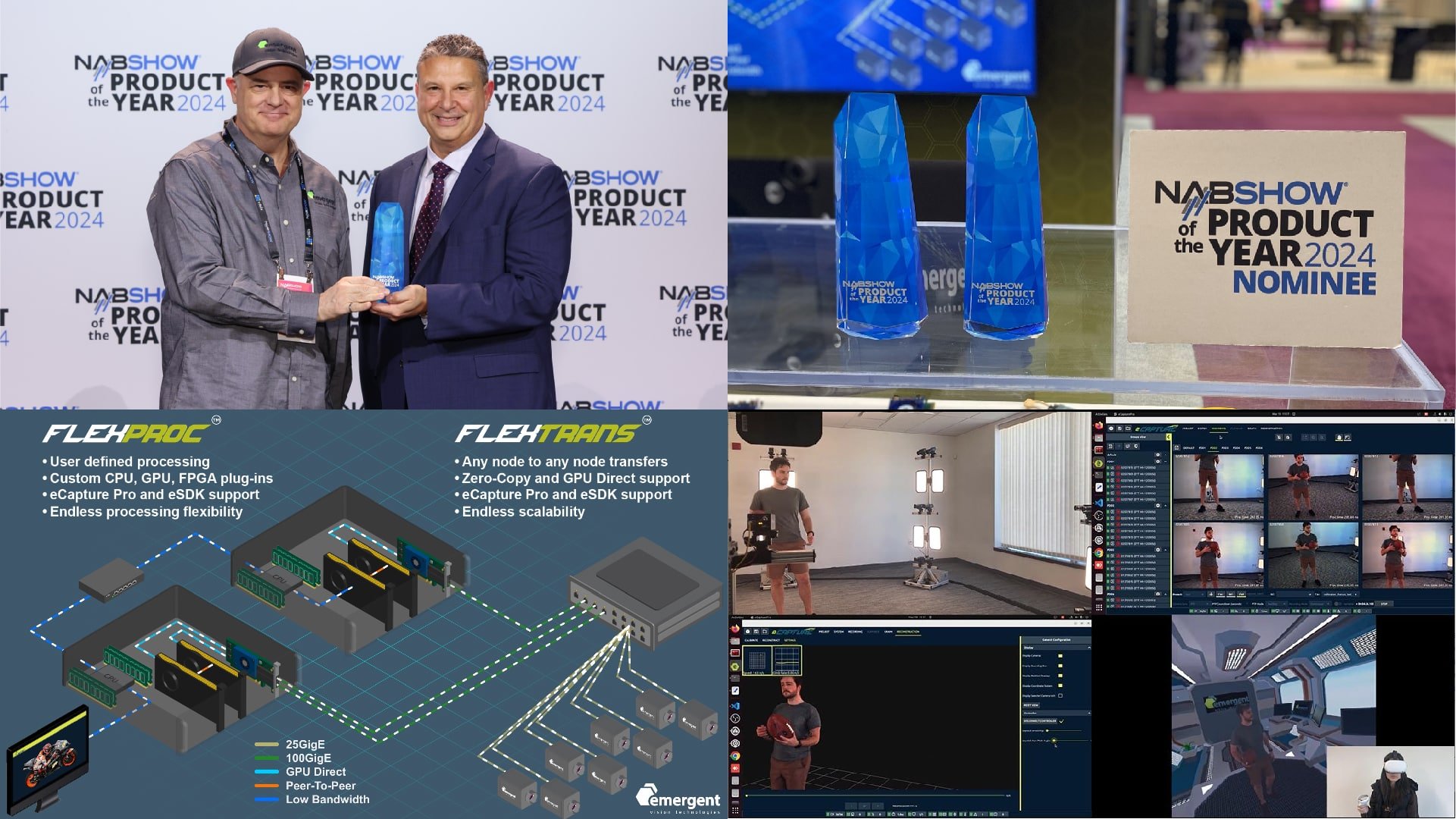

Emergent Vision Technologies has received several other awards for its work in high-speed imaging technologies, including but not limited to two Product of the Year awards at NAB Show 2024 and a Platinum Innovator Award at Automate 2024. Emergent’s eCapture Pro: FlexProc and FlexTrans Technologies and Real-Time Volumetric Video were honored in the AI and Machine Vision category for their technological advances.

In recent years, Emergent Vision Technologies has received several other awards and honors, including three 2023 Innovators Awards from Automate, two 2022 NAB Product of the Year Awards, a 2022 Gold Innovators Award from Vision Systems Design China, and many more.

To learn more about our award-winning machine vision cameras or software, please contact us today.

Proven performance and reliability!

10+ years of shipping 10GigE

6+ years of shipping 25GigE

3+ years of shipping 100GigE

Avoid the imitators.

Engage the innovators.