Tech Portal

UDP, TCP and RDMA for GigE Vision Cameras

GigE Vision + GVSP

- 15+ Years widespread use

- Fully ratified and mature standard

- Massive adoption

- UDP based protocol

- True streaming protocol

- Multicast support

- Has everything you need

- Needs properly designed receiver at high-speed

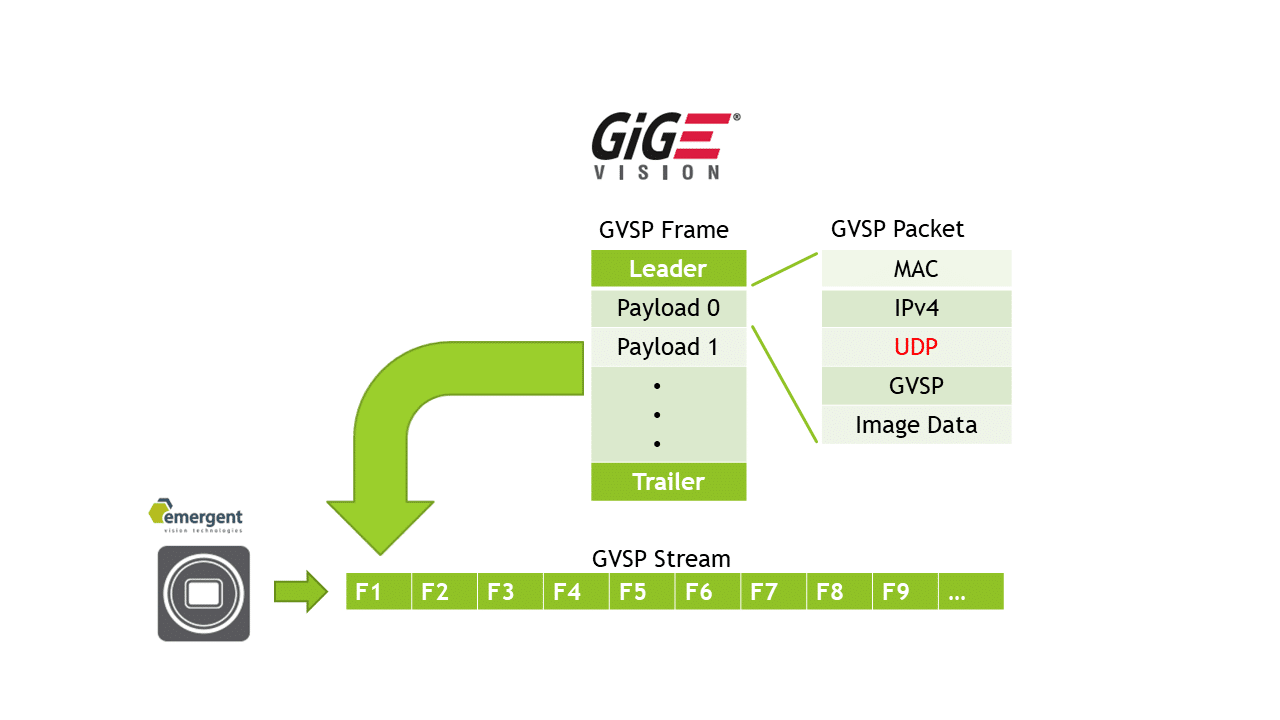

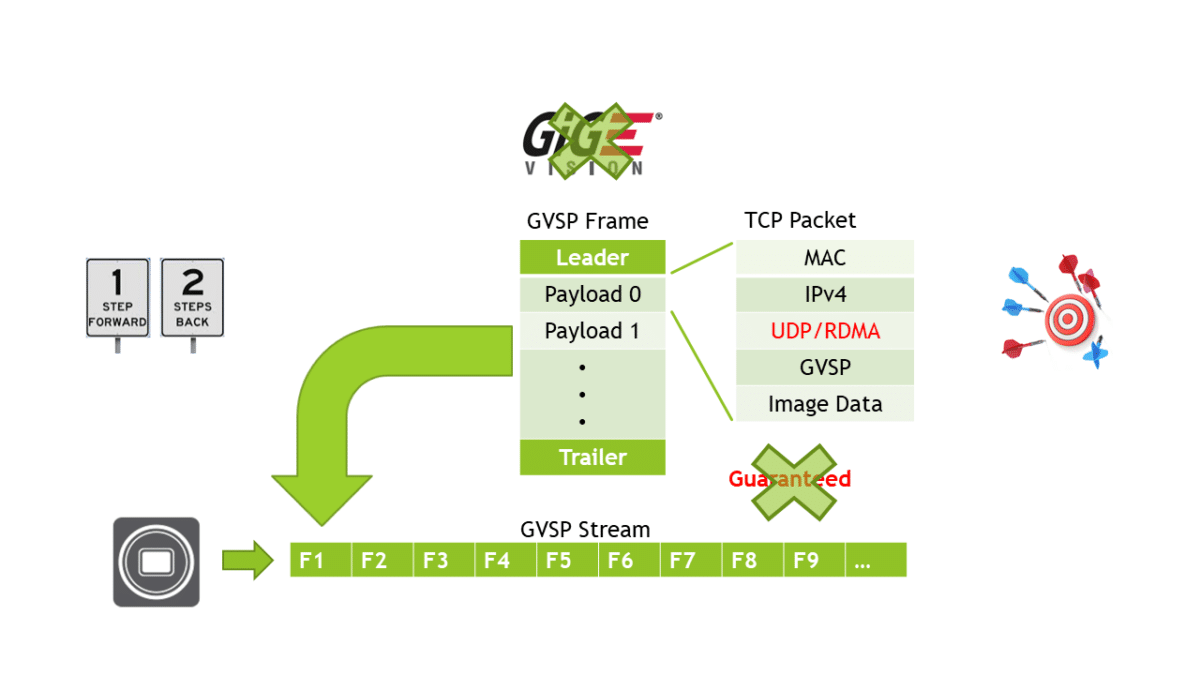

Let us take a moment to understand the technology behind GigE Vision. GVSP is the Ethernet streaming protocol used in the current standard. A stream is made up of multiple frames (or images). Each frame is made up of a leader packet, multiple image (or payload) packets, and a trailer packet. All packets are carried over UDP, which is a connectionless protocol. This simply means the camera sends the packets and leaves the receiver to its job of placing the data in the destination buffer. Because UDP is connectionless, it adds no connection-management overhead such as handshakes or acknowledgments, which leads to maximum network performance. It also means fundamentals like multicasting are supported. The receiver must be properly designed to avoid data loss. CXP takes the same approach and leaves the receiver to its job of placing the data in the destination buffer. With a quality receiver, this leads to top performance and the lowest latency and jitter. We will note that the inability of some companies to design a quality receiver has led them down alternate paths.

Figure: GVSP frame and packet structure.
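The leader/payload/trailer structure described above can be illustrated with a minimal sketch. The `Packet` class, the packet numbering, and both helper functions are hypothetical simplifications for illustration; this is not the actual GVSP wire format, which carries additional header fields.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple

LEADER, PAYLOAD, TRAILER = "leader", "payload", "trailer"


@dataclass
class Packet:
    """Simplified stand-in for a streaming packet (not the real GVSP header)."""
    kind: str          # LEADER, PAYLOAD, or TRAILER
    packet_id: int     # 0 for the leader, 1..N for payload, N+1 for the trailer
    data: bytes = b""  # image bytes; empty for leader and trailer in this sketch


def split_into_packets(image: bytes, payload_size: int) -> List[Packet]:
    """Sender side: wrap one frame as leader + payload packets + trailer."""
    chunks = [image[i:i + payload_size] for i in range(0, len(image), payload_size)]
    packets = [Packet(LEADER, 0)]
    packets += [Packet(PAYLOAD, n, chunk) for n, chunk in enumerate(chunks, start=1)]
    packets.append(Packet(TRAILER, len(chunks) + 1))
    return packets


def assemble_frame(packets: Iterable[Packet], frame_size: int,
                   payload_size: int) -> Tuple[bytes, List[int]]:
    """Receiver side: place each payload at its offset and report missing packet ids."""
    buffer = bytearray(frame_size)
    expected = -(-frame_size // payload_size)  # ceiling division
    seen = set()
    for packet in packets:
        if packet.kind != PAYLOAD:
            continue  # leader and trailer carry no image data in this sketch
        offset = (packet.packet_id - 1) * payload_size
        buffer[offset:offset + len(packet.data)] = packet.data
        seen.add(packet.packet_id)
    missing = sorted(set(range(1, expected + 1)) - seen)
    return bytes(buffer), missing


image = bytes(range(256)) * 4                   # 1024-byte test frame
packets = split_into_packets(image, 300)        # leader + 4 payload + trailer
frame, missing = assemble_frame(reversed(packets), len(image), 300)
print(frame == image, missing)                  # arrival order does not matter
```

Because each payload packet carries its own index, the receiver can place data directly at the right offset regardless of arrival order, and a gap in the indices is how a lost packet would show up.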

Conventional GigEVision + GVSP

- Memory copy required (header splitting in software)

- Higher CPU %

- 3x System Memory bandwidth

- 3x More Powerful PC

- 3x PC Quantity

- 1/3 System Density

- Needs well designed receiver at high-speed

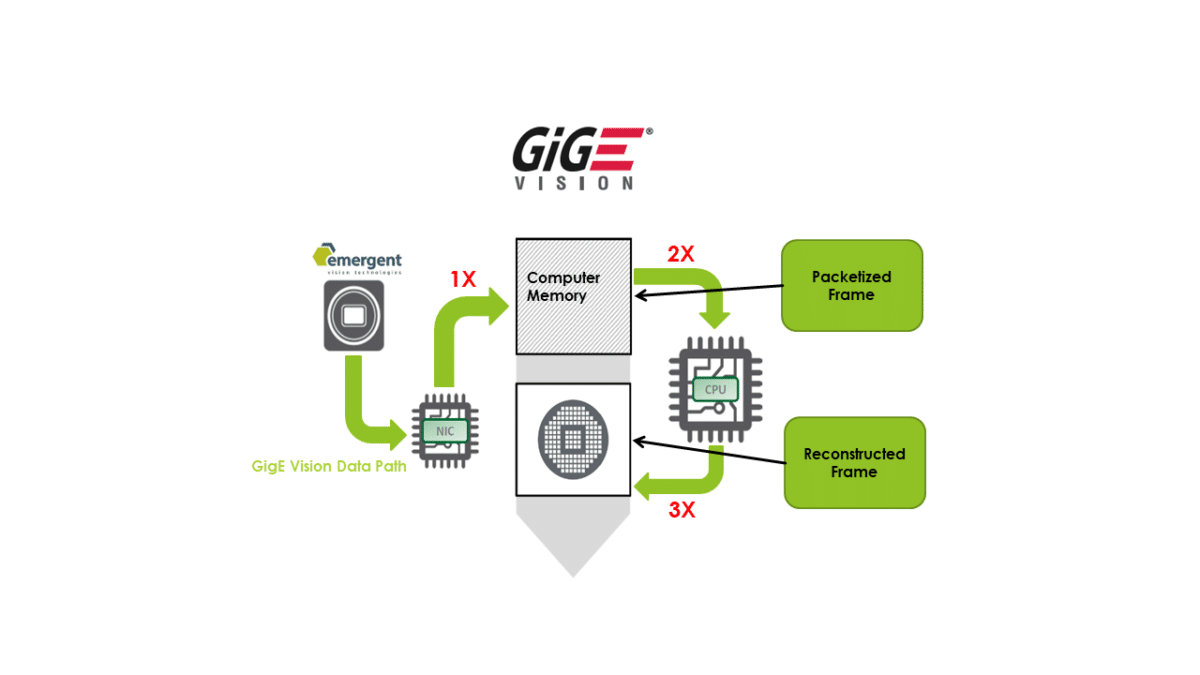

Conventional GVSP uses header splitting in software to strip the headers off the GVSP packets and place the image data from the payload packets into a contiguous memory buffer. This process raises CPU usage but, more importantly, consumes 3x the system memory bandwidth of a zero-copy implementation. The result is roughly 33% efficiency for the system, which factors into system cost in a number of ways. This is an example of a poorly designed receiver, and many in the market are still doing this even at 10GigE. We even see cases where some companies have trouble running multiple 1GigE cameras in a single server, all related to poor receiver design.

Figure: Data path in a conventional GigEVision + GVSP implementation.
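The 3x and 33% figures can be reproduced with a small back-of-the-envelope model. The accounting used here is an assumption for illustration: one DMA write by the NIC into system memory, plus one read and one write for each software copy. The 10 Gbps stream rate is likewise just an example value.

```python
def memory_traffic_gbps(stream_gbps: float, software_copies: int) -> float:
    """System memory traffic: 1 NIC DMA write + (1 read + 1 write) per software copy."""
    return stream_gbps * (1 + 2 * software_copies)


def memory_efficiency(software_copies: int) -> float:
    """Fraction of memory traffic that is the unavoidable NIC DMA write."""
    return 1 / (1 + 2 * software_copies)


stream = 10.0  # Gbps, example camera stream (assumed value)
zero_copy = memory_traffic_gbps(stream, software_copies=0)
conventional = memory_traffic_gbps(stream, software_copies=1)

print(f"zero copy:    {zero_copy:.0f} Gbps of memory traffic")
print(f"conventional: {conventional:.0f} Gbps of memory traffic")
print(f"efficiency:   {memory_efficiency(1):.0%}")
```

Under this model a single software copy triples the memory traffic for the same stream, which is where the 3x bandwidth and 33% efficiency figures come from.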

Optimized GigEVision + GVSP

- True zero copy

- Uses header splitting (HS) in OTS NICs

- Full kernel bypass

- HS in use for SMPTE 2110 in M&E market

- Supported by industry processing cards

- Lowest latency and jitter

- No resends or flow control required (nor needed) with quality implementation

- Remains GigEVision compliant

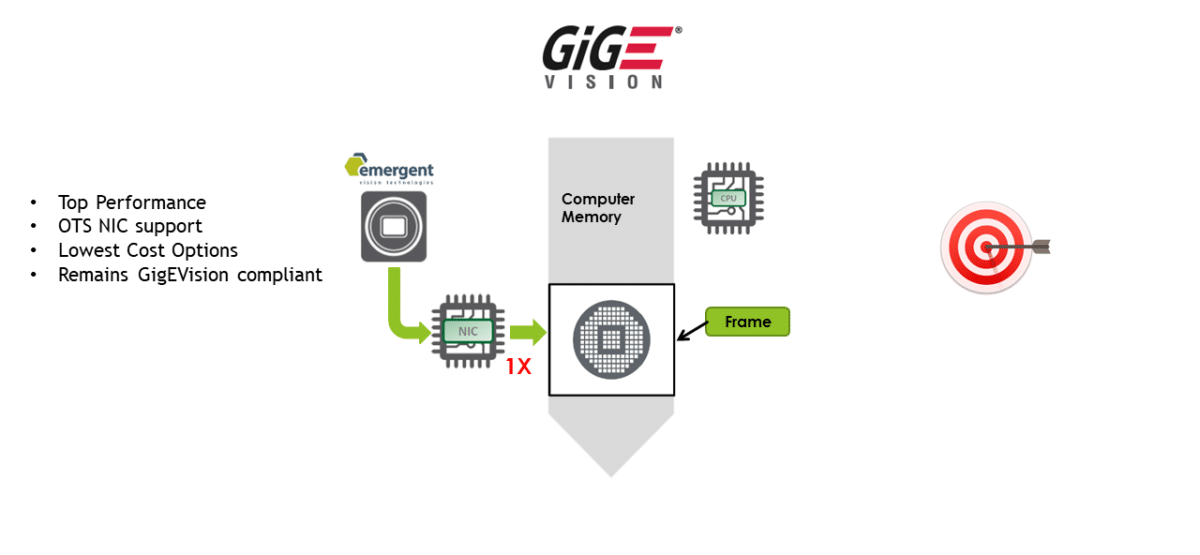

Optimized GVSP uses header splitting in hardware, which is available in OTS performance NICs and other processing devices. This is the same method used for SMPTE 2110 in the massive M&E market, which also has zero tolerance for data loss. That market relies on well-designed receivers, so OTS NICs provide header splitting technologies that are used in streaming implementations like SMPTE 2110 as well as in message-based and connected protocols like RDMA/RoCE. We work with the same vendors who support RDMA/RoCE to use header splitting to achieve the most feature-rich and highest-performance receiver while adhering to the current and highly mature GigEVision specification.

Figure (top): Data path in an optimized implementation of GigEVision.

Figure (bottom): Partners of Emergent Vision Technologies.

GigEVision + TCP (Attempt #1)

- GigEVision standard addition proposed

- *Fully proprietary* until ratified (maybe in 2 years)

- TCP based protocol

- Overhead lowers performance relative to UDP

- Not a zero-copy technology (3x mem bw)

- Uses resends/flow control as a crutch

- Not a streaming protocol (like USB)

- Strictly point to point (like USB/CXP)

- No Multicast support (like USB/CXP)

- No GPUDirect support

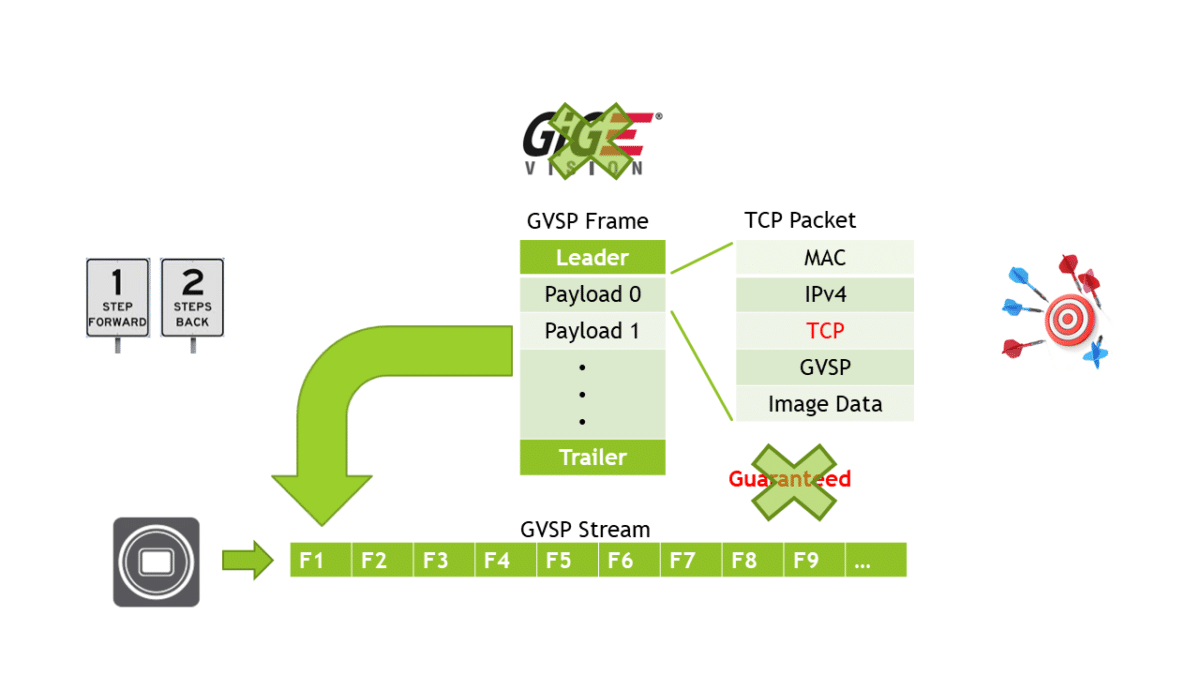

Some vendors have trouble with header splitting technologies, either in implementing them or in achieving performance, so they look for other solutions. Some also try to rely on very low-cost NICs or even low-performance motherboard-resident chips, which is dangerous for applications like wafer processing, where problems incur a high penalty. One proposal is to use TCP. This connected protocol offers limited advantages over UDP. TCP offers resends and flow control for more reliable transfer, but this impacts performance and induces latency and jitter. TCP still requires data copies, and since it is a connected protocol it has overhead that offsets the advantage of reliable transfer as cameras are added to the system. This may be acceptable for a lower-performance system, but running many GigE cameras on a single system will hardly provide guaranteed performance. A properly designed and margined system based on UDP, even without hardware header splitting, will do just as well. Performance is never guaranteed unless a system is well designed and margined.

CXP does not use resends and flow control and has optimal receiver performance, latency and jitter. So why would GigE Vision need them?

One reason is insufficient physical buffering on some NICs, which then cannot cope with OS fluctuations. Another is poor bit error rate (BER) performance.

The good news is that quality NICs, like CXP, have ample physical buffering to deal with this. BER, frankly, is only an issue in very poorly designed low-cost products.

The reality, however, is that a properly tuned server, including BIOS changes, in concert with properly written receiver code is important in all cases.

Figure: GigE Vision + TCP implementation is 1 step forward and 2 steps back.

GigE Vision + RDMA/RoCE (Attempt #2)

- GigEVision standard addition proposed

- *Fully proprietary* until ratified (maybe in 2 years)

- RDMA based protocol supported by OTS NICs

- Overhead lowers performance relative to UDP

- Zero-copy technology

- Uses resends/flow control as a crutch

- Not a streaming protocol (like USB/CXP)

- Strictly point to point (like USB/CXP)

- No Multicast support (like USB/CXP)

- No NVIDIA GPUDirect support on Windows

RDMA Cameras: Zero-Copy Transfer With More Overhead

As with TCP, some vendors are looking to RDMA to avoid a header splitting implementation. These are the same vendors who have trouble working with more than two 10GigE cameras in a system and, frankly, have trouble with multi-camera systems based on 1GigE.

RDMA achieves zero-copy transfer to the image buffer, which is good. Like TCP, however, RDMA is a connected technology, so it incurs overhead and will limit scalability. Performance is never guaranteed unless a system is well designed and margined.

RDMA offers resends and flow control for more reliable transfer, but this, as with TCP, impacts performance and induces latency and jitter.

RDMA, like TCP, also cannot support a fundamental networking technology like multicasting.

RDMA is basically a zero-copy TCP, or even USB.

Both RDMA and TCP are point-to-point technologies, so one might ask why use GigEVision instead of CXP or USB, especially given the much improved cost/performance ratios for FPGAs as used in CXP frame grabbers and Emergent’s own cards. Keep in mind that with the same NICs used for RDMA we can also leverage the header splitting feature of the card to keep the GigEVision standard intact for limitless integration.

Another important reality is that flow control and resends for TCP and RDMA rely on buffering in the camera. Regardless of where it is, be it in the camera or the card, buffering is the fundamental requirement for reliable transfer. Further, in any design, be it using UDP, TCP, RDMA, or even CXP, if these protective buffers overflow then you lose data.

Figure: GigE Vision + RDMA/RoCE implementation is 1 step forward and 2 steps back.

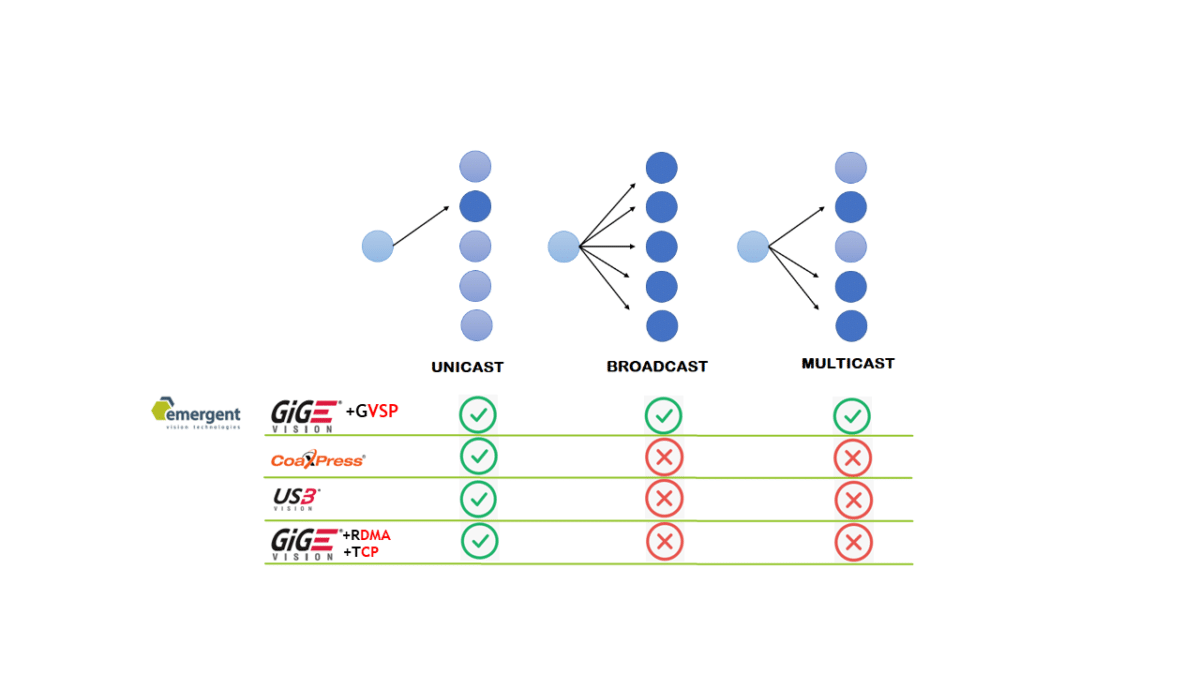

Multicasting

- Connected technologies do not support broadcast/multicast apps

- Eliminates efficient redundancy

- Eliminates distributed processing

- Eliminates a major fundamental in networking

- Available NOW!

This slide highlights the point about multicast technologies. GigEVision+GVSP is currently the only protocol which supports this fundamental networking feature. Other standards will be quickly dismissed in applications requiring efficient redundancy and distributed processing.

Figure: Only GigEVision + GVSP supports broadcasting and multicasting.
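To make the multicast point concrete, here is a minimal sketch of how a receiving node could join a UDP multicast group using the standard socket API. The group address and port are hypothetical placeholders, and this uses an ordinary kernel socket rather than the kernel-bypass receive path discussed earlier; it only illustrates that any number of nodes can subscribe to the same UDP stream.

```python
import socket
import struct


def build_membership_request(group: str, interface: str = "0.0.0.0") -> bytes:
    """Pack an ip_mreq structure: group address, then local interface address."""
    return struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton(interface))


def open_multicast_receiver(group: str, port: int) -> socket.socket:
    """Open a UDP socket and subscribe it to the given multicast group."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP,
                    build_membership_request(group))
    return sock


# Example usage (hypothetical group and port; run on each processing node):
#   receiver = open_multicast_receiver("239.192.0.1", 50000)
#   packet, sender = receiver.recvfrom(9000)
```

Each processing node runs the same join, and the network delivers one copy of the stream to every subscriber. A connected protocol has no equivalent, since each connection has exactly one receiver.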

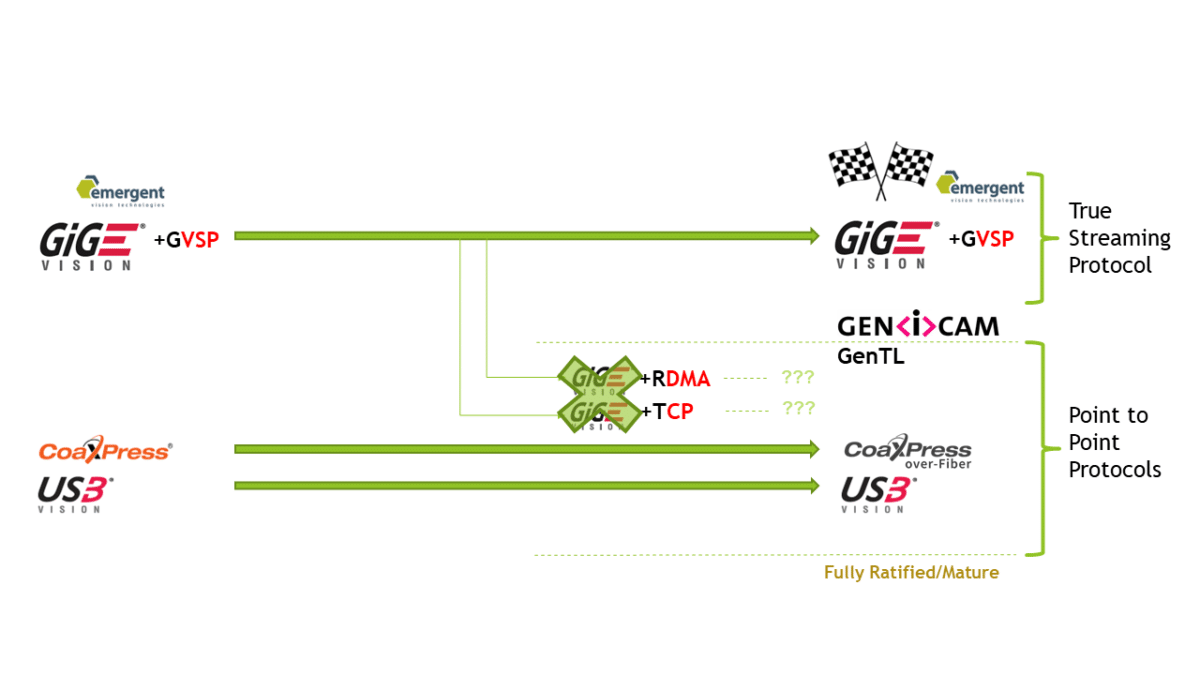

Convergence of the Interfaces

This slide illustrates how the proposed and ratified changes are causing the interface standards to converge. USB remains mostly the same but is a point-to-point technology. CXP has adopted the Ethernet physical layer, converging toward GigEVision. GigEVision+RDMA and GigEVision+TCP, if and when they are ratified (perhaps 2 years out), converge toward CXP and USB as point-to-point technologies. GigEVision+GVSP will maintain its integrity and feature set and will not converge with the other protocols.

Figure: Only GigEVision + GVSP stands out as the true streaming protocol.

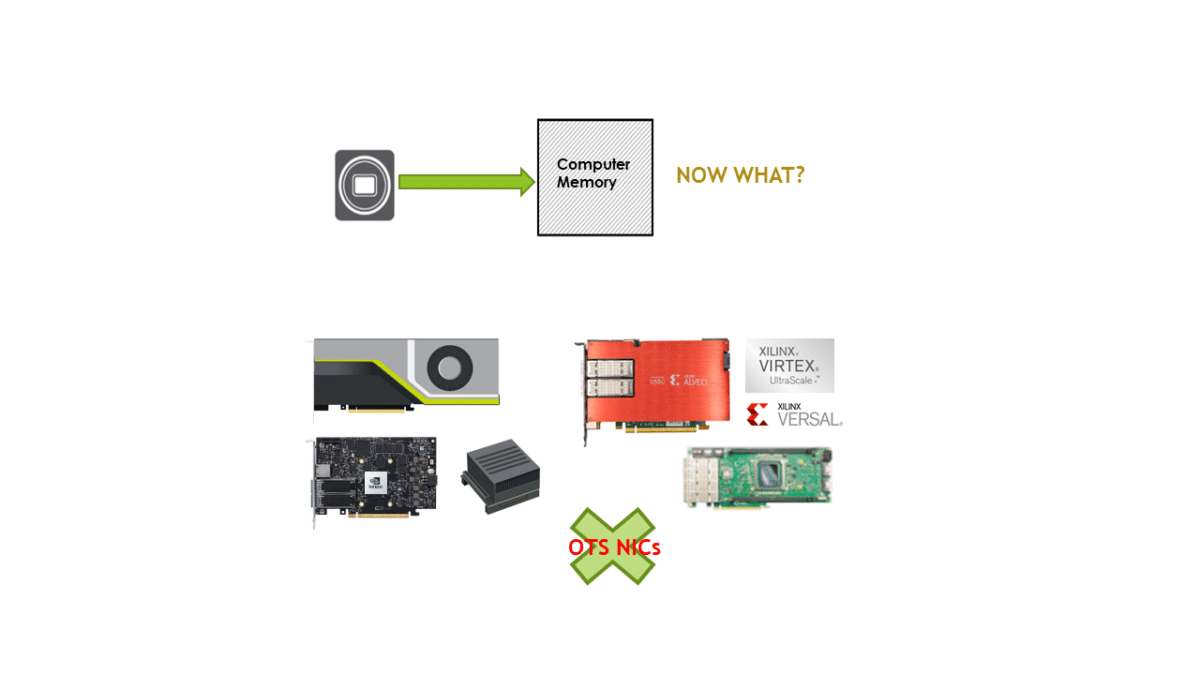

Processing Technologies

- GPU Cards – all processing done on card

- FPGA Cards – all processing done on card

- GPUDirect – bypass system memory to GPU

- Peer to Peer Transfers – move data node to node

- AI Engines – features of GPUs and FPGA cards

- NIC market converging with HPC

So, let’s say we now have our data safe in system memory by whatever means. Now what do we do with it? We touched on this idea above, and it does not even seem to be part of the discussion among those working to modify the GigE Vision standard to add RDMA or TCP. For some high-speed vision applications, the CPU and system memory are a sufficient resource. For other performance applications using multiple 100GigE, 25GigE or even 10GigE cameras, real-time processing requires offloading the task to better-suited processing nodes, because CPUs and their system memory often cannot cope. Do RDMA and TCP matter now? The answer is no, as the cards can equally process GVSP. Let’s look at some examples to understand why.

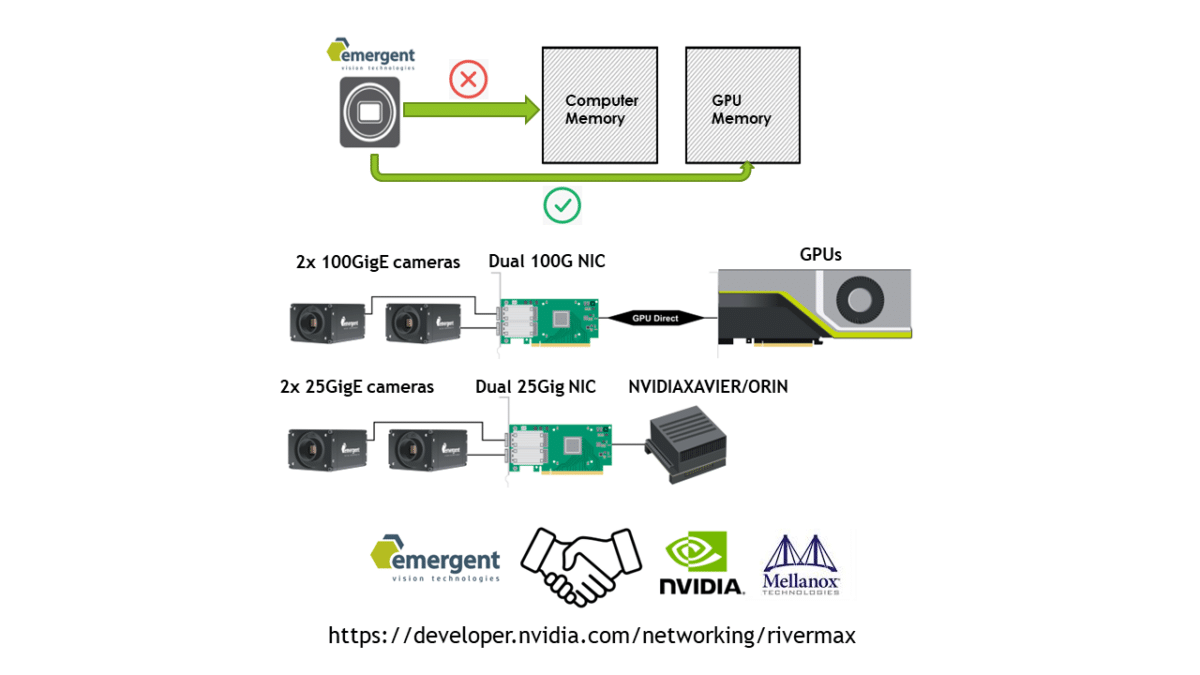

Figure: Off-the-shelf NICs cannot process pixel data and simply pass the pixel data through to the system.

GPUDirect

- 0 CPU and 0 System Memory bandwidth

- NVIDIA product requires Rivermax for Windows

- NVIDIA requires partnership – select few

- Linux is open for GPUDirect on std GPUs

- 80% of MV applications on Windows

- Some apps include AOI, drone, VR, sports

- Lowers PC requirements

- Peer to peer support

- Available NOW!

GPUDirect is a fantastic technology and is in use by many of our customers in AOI, drone, VR and sports applications, to name a few. In this case, the CPU and system memory remain untouched while the data is transferred directly from the NIC to the GPU. On Linux, many things like this are possible with many GPUs and arbitrary NICs. On Windows, NVIDIA GPUs only allow GPUDirect from Mellanox NICs using Rivermax, and only for select partner vendors like Emergent. RDMA does not support GPUDirect here. With 80% of machine vision applications running on Windows, this limits others looking to implement this feature. Emergent has supported this feature for over 2 years.

Figure: GPUDirect passes pixel data straight to the GPU bypassing CPU and system memory.

Video: GPUDirect + HZ-65000G 100GigE demo.

Video: NVIDIA Xavier + HZ-21000G 100GigE.

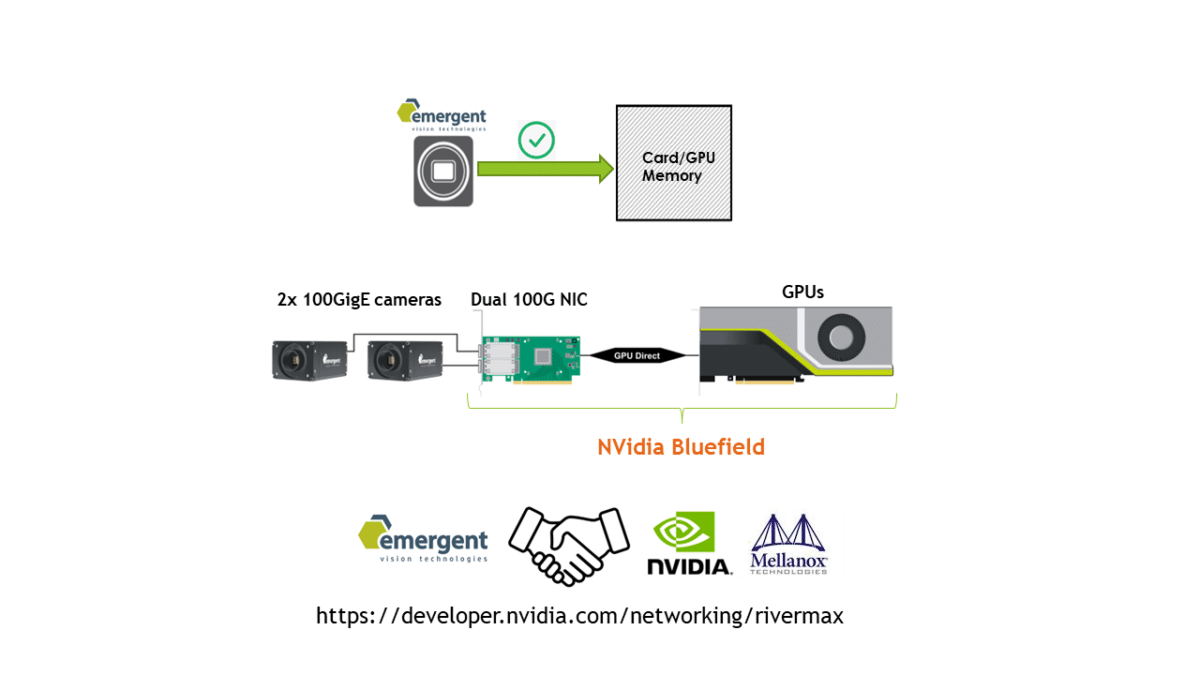

NVIDIA BlueField

- 0 CPU and 0 System Memory bandwidth

- Single PCIe slot required

- CPU not involved at all

- NVIDIA product requires Rivermax on Windows

- NVIDIA requires partnership

- 80% of MV applications on Windows

- Lowers PC requirements

- Peer to peer support

- Future integrated models for HPC coming

- Available NOW!

Building on the previous slide, NVIDIA has merged NICs with processing resources to enable a single-slot solution. Future models are expected as NVIDIA focuses on HPC. As an NVIDIA partner, Emergent has access to all of these technologies. These technologies are also not limited to a single video stream but can handle multiple streams, limited only by the resources of the device.

Figure: NVIDIA BlueField technology offers NICs with built-in processing resources.

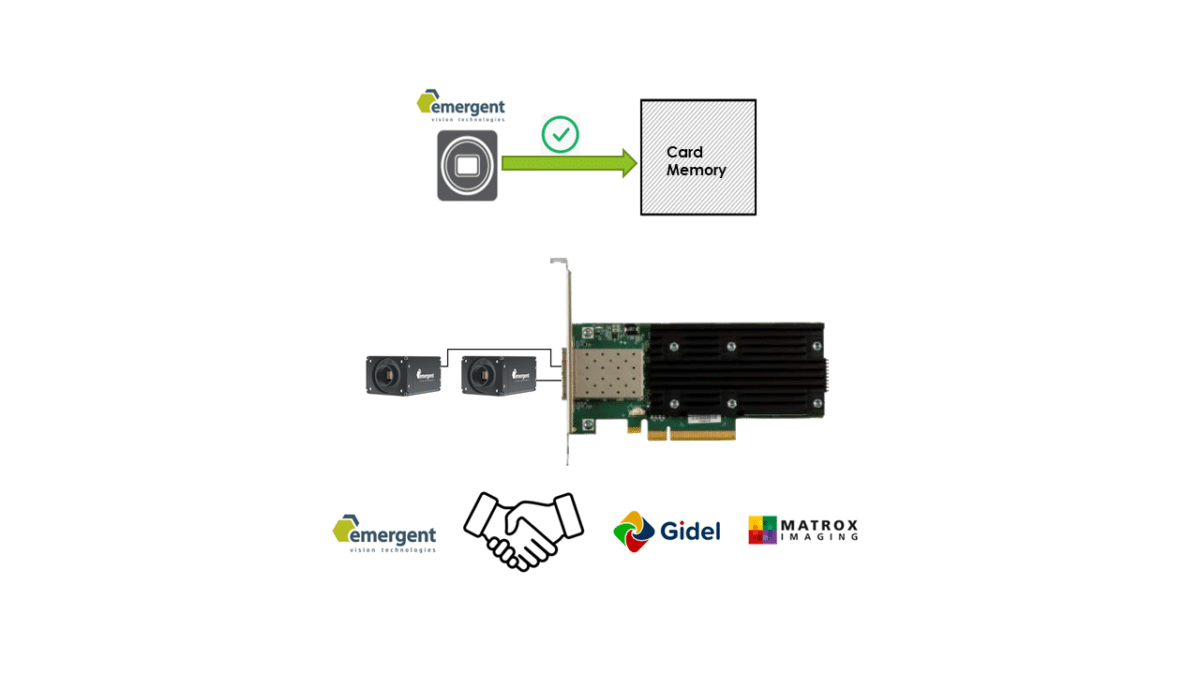

OTS FPGA Cards w/GVSP

- 0 CPU and 0 System Memory bandwidth

- CPU not involved at all

- OTS FPGA Cards with native Emergent provided GVSP core support or with OTS GVSP cores from Xilinx, etc.

- MV algorithms in abundance

- Windows and Linux support

- Lowers PC requirements

- Peer to peer support

- Available NOW!

Within the Machine Vision space, we look to companies like Matrox and Gidel, who offer FPGA processing cards with GigEVision+GVSP front ends to allow seamless integration with Emergent cameras. GPUs have their place as processing nodes, but FPGA cards can often handle the workload more efficiently. Customers can leverage vendor IP for quicker time to market. These technologies are also not limited to a single video stream but can handle multiple streams, limited only by the device resources.

Figure: FPGA processing cards with GigEVision+GVSP front ends.

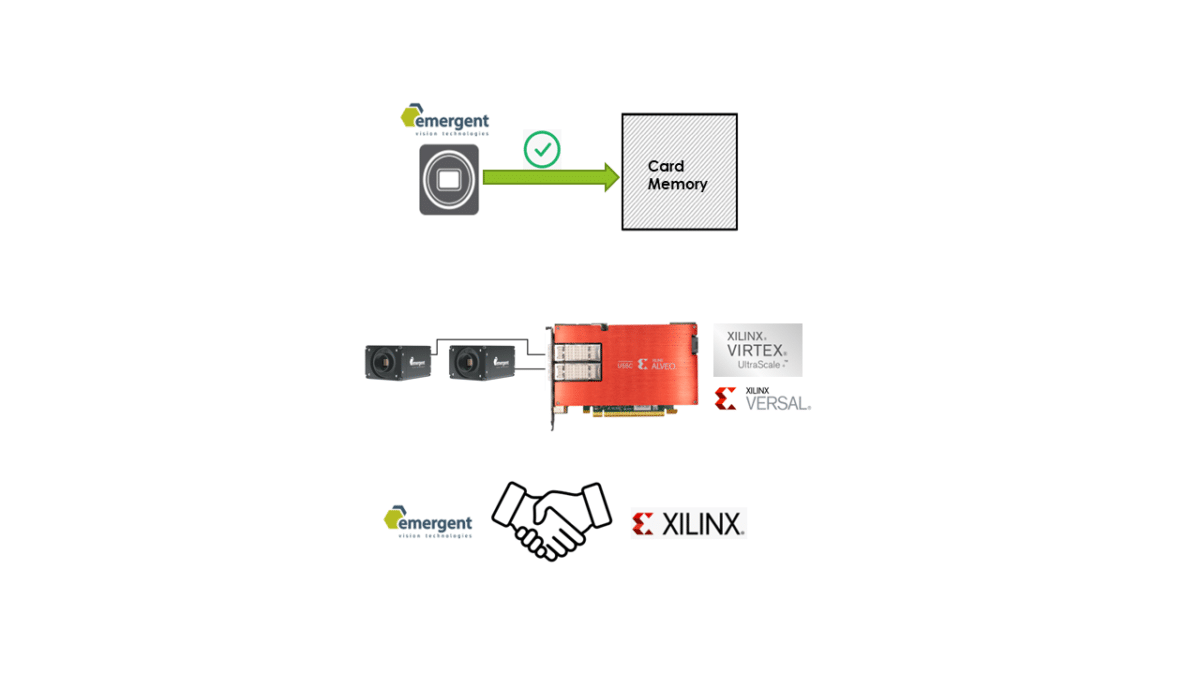

OTS FPGA Cards

- 0 CPU and 0 System Memory bandwidth

- CPU not involved at all

- OTS FPGA Cards with native Emergent provided GVSP core support or with OTS GVSP cores from Xilinx, etc.

- MV algorithms in abundance

- Windows and Linux support

- Lowers PC requirements

- Peer to peer support

- Available NOW!

One of the nice things about Ethernet is the vast cross-industry resource pool we can draw from. Xilinx is one such vendor we work closely with to provide advanced processing resources. To integrate with Emergent cameras, a customer could take their current GigEVision core and port this to one of many cards like Xilinx Alveo which already has the same interface as our cameras. For those new to GigEVision drivers, we can provide ported firmware and drivers for cards like these to get you up and running quickly and let you focus on the particulars of your application. With a quick search, you will become aware of the abundance of FPGA code resources at your disposal. These technologies are also not limited to a single video stream but can handle multiple streams limited only by the device resources.

Figure: Emergent cameras seamlessly integrate with Xilinx Alveo.

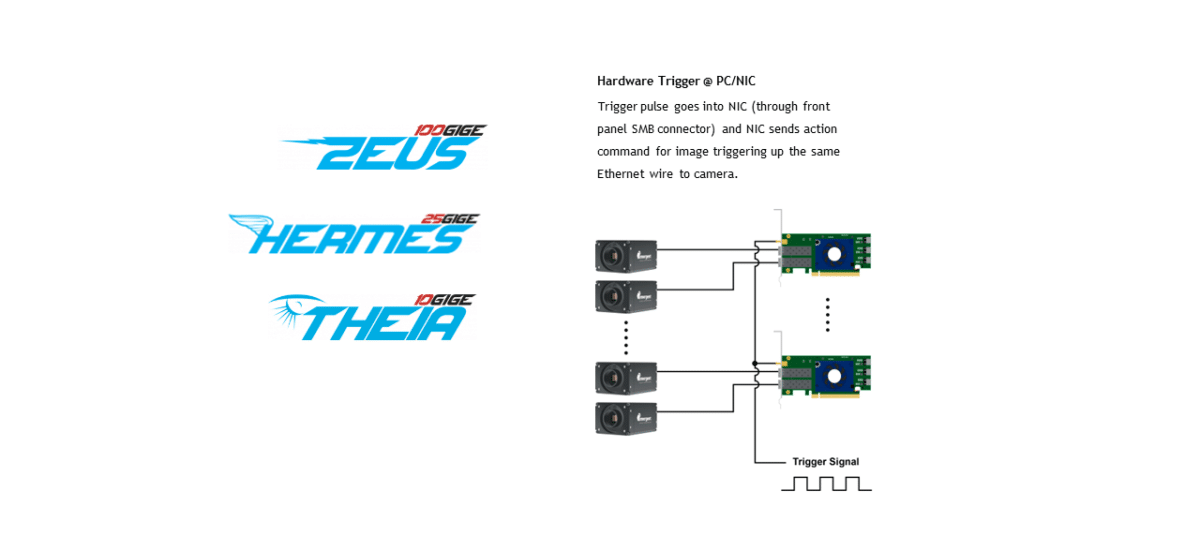

Emergent Cards

- GVSP support

- Windows and Linux support

- Lowers PC requirements

- GPUDirect support

- Peer to peer support

- Front port trigger

- Full supply chain control

- Intelligent image routing

- First in series of smart NICs for MV

- Available NOW!

Emergent begins its foray into the PCIe card space, which provides certain benefits to our customers like intelligent image reordering, routing, and expanded buffers. In addition, we have customers that wish to avoid switches in their setups, with cameras spaced far apart and at distances suitable for fiber. Yet, they still want tight synchronization. Our front port trigger with action command for image triggering satisfies this need. Developing our own cards also allows us to manage the full supply chain for our typical customer applications as well as maintain strict quality control. Emergent will also look to develop advanced processing cards to fulfill the needs of our customers, as well as application-specific modules to reduce time to market. These technologies are also not limited to a single video stream but can handle multiple streams, limited only by the device resources.

Figure: Emergent’s own PCIe cards enable management of the full supply chain for data delivery.

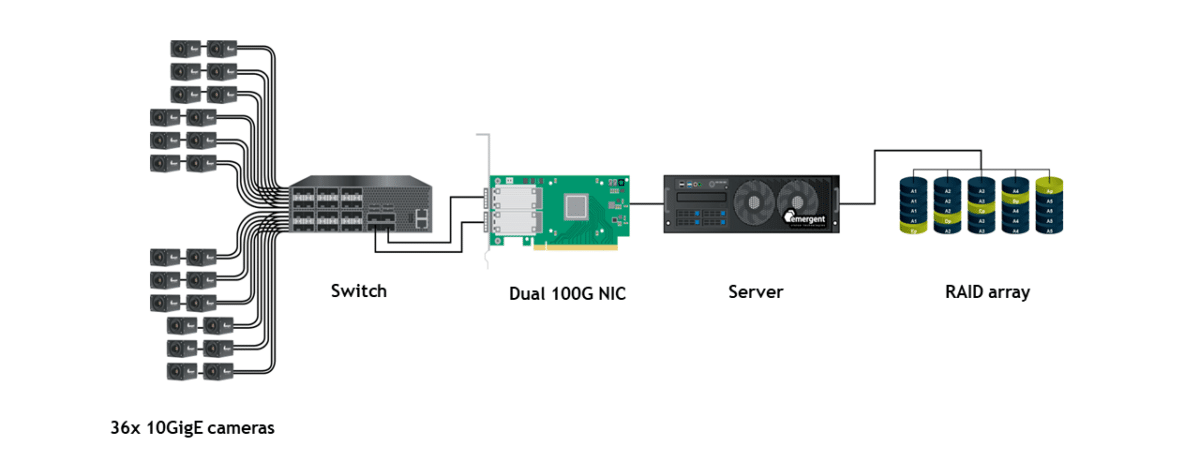

36 camera system

- Highest performance single PC solution

- Windows and Linux support

- Lowest cost multi-camera setup

- Turnkey eCapture software support

- Customize with GPU or FPGA processing nodes

- Easily scalable to multiple servers and processing nodes

- 0 data loss

- Available NOW!

We have presented this setup during a few online presentations as well as at trade shows like NAB in Las Vegas and the Vision Show in Stuttgart this past month. The system is by far the highest performance and highest density solution in the market. The system has zero data loss while taking in 210 Gbps of image data and storing it to 8 x U.2 NVMe drives. The server is a single mid-range AMD and Asus server configuration that runs our eCapture Pro performance software. Some customers wish to take this setup and add GPUs in the available slots to perform real-time processing.
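To put the 210 Gbps figure in perspective, the short calculation below derives the average load per camera and per drive. It assumes the load is spread evenly across the 36 cameras and 8 drives and uses decimal units (1 GB/s = 8 Gbps); the actual distribution in the demo system is not stated.

```python
TOTAL_GBPS = 210   # aggregate image data rate quoted for the system
CAMERAS = 36
DRIVES = 8


def per_unit_gbps(total_gbps: float, units: int) -> float:
    """Average rate per unit, assuming an even split."""
    return total_gbps / units


def gbps_to_gigabytes_per_s(gbps: float) -> float:
    """Convert gigabits per second to gigabytes per second (decimal units)."""
    return gbps / 8


per_camera = per_unit_gbps(TOTAL_GBPS, CAMERAS)
per_drive = per_unit_gbps(TOTAL_GBPS, DRIVES)

print(f"per camera: {per_camera:.2f} Gbps")                            # 5.83 Gbps
print(f"per drive:  {per_drive:.2f} Gbps "
      f"= {gbps_to_gigabytes_per_s(per_drive):.2f} GB/s")              # 26.25 Gbps = 3.28 GB/s
print(f"total:      {gbps_to_gigabytes_per_s(TOTAL_GBPS):.2f} GB/s")   # 26.25 GB/s
```

Under these assumptions, each drive must sustain a little over 3 GB/s of sequential writes, with every camera link running at well under its 10 Gbps line rate.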

We have customers who have scaled systems up to 250+ cameras in a single system using our 25GigE cameras – this exemplifies the ease of scalability.

As mentioned, we have customers that wish to avoid switches in their setups. Switches add cost, starting at about $7,000 for a 48-port 25G + 8-port 100G configuration through our partner network, but they help to reduce the overall system cost substantially. Smaller configurations like 18-port 25G + 4-port 100G are available as well. The switch market is also getting more competitive as more companies come to market with 25G/100G and PTP support. You can count on Emergent for support in switch supply and configuration.

Figure: Anatomy of a 36 x 10GigE camera system.

Video: Demo of 36 x 10GigE camera system.

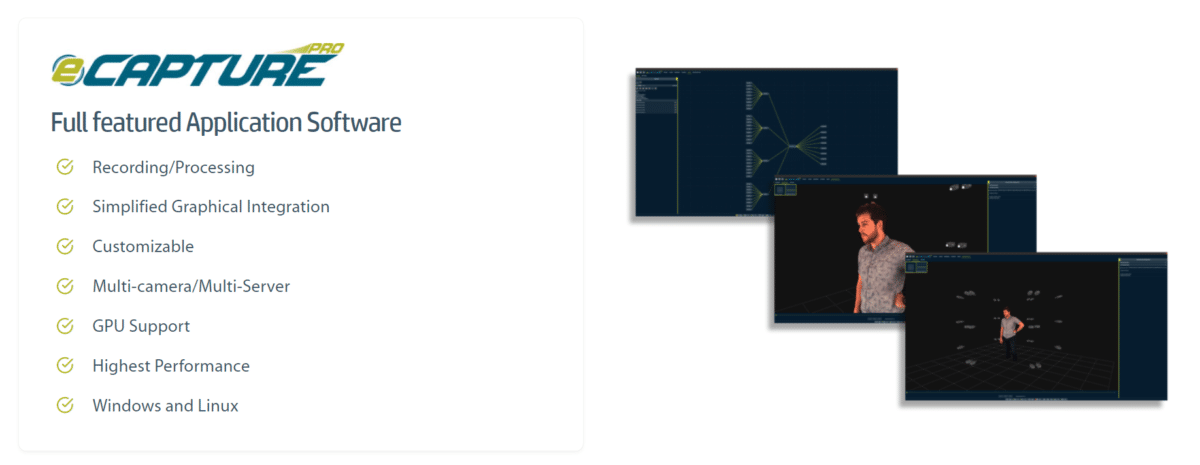

eCapture Pro

eCapture Pro is built on top of Emergent’s eSDK and is the glue that allows us to achieve the highest performance in the market. Processing node technologies are being added and supported for custom performance deployable systems.

Available NOW!

Figure: eCapture Pro, Emergent’s full featured application software.

FAQ

Will Emergent adopt GigEVision+TCP or GigEVision+RDMA?

Currently, only a few smaller companies are actively involved in working on the standard, while the biggest players in the market are sitting on the sidelines. Some are actually using this at an early stage as marketing noise to claim some advantage over existing solutions. Industry adoption is expected to be very slow, as with most things within the Machine Vision market. A fully ratified standard could be 2 years out, so we certainly have time to adapt, and for Emergent this would be very quick if needed, through firmware upgrades to any of our existing fully certified products. The fact is that general header splitting is the highest performance and most flexible method of offloading. An example of this flexibility is that the same method can be used for the SMPTE 2110 protocol, whereas implementations for GigEVision using RDMA and TCP are boxing themselves into a corner. One thing we will not do is promote and sell cameras using RDMA or TCP alongside the GigEVision logo until the additions are ratified, as this can be very misleading.

Do customers care which of GigEVision+TCP, GigEVision+RDMA or even GigEVision+GVSP is used?

Currently, no Emergent customers are interested in TCP or RDMA.

Here are the things to consider for your high speed application:

- Commitment – Is the vendor committed to high speed or is the vendor spread too thin due to lack of focus?

- Performance – Is performance achieved by whatever means?

- Flexibility – Does the vendor entertain customization options?

- Support – How quick is the turnaround on your support request, and is the vendor there with you from start to finish at all levels of the application?

- Price – Is the SYSTEM LEVEL price/performance ratio acceptable? You must compare the pricing of the complete solution.

- Supply chain – How soon can I get my product now and years from now?

- Experience – How mature is this product? Do not put too much faith in one vendor’s claims. Experience matters, as does due diligence.

Consider all these points and make your decision.

What causes convergence in the standards?

Convergence happens in industries where standardization is not as strong. It can highlight problems with the standard. CXP, for example, was not forward-looking enough, and its backers realized that high speed and longer cable lengths were not practically achievable with coax cable, so CXP adopted the Ethernet physical layer. Other times, as with GigEVision+GVSP, the problem is not with the standard but rather with the implementations, so some look to change the standard, sometimes in a very hurried manner and sometimes to achieve a marketing “me first” status.

Would it be more likely buffers overflow with GigEVision+RDMA vs GigEVision+GVSP?

As stated, the overhead of GigEVision+RDMA will be higher than that of GigEVision+GVSP, and this can result in buffer overflow sooner on a GigEVision+RDMA system. It should be noted that the less this buffer is used, the lower the latency and jitter the system will exhibit. You may have greater reliability with a bigger buffer, but the more images back up in the buffers, the lower the performance and the higher the latency and jitter will be. So, a reminder: a properly tuned server, including BIOS changes, in concert with performance receiver drivers is important in all cases to limit buffer backup and maximize performance.
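The relationship between overhead, buffer fill, and latency can be sketched with a simple fluid model. All numbers below are illustrative assumptions, not measurements of any real system: a fixed-size buffer fills at the camera data rate and drains at whatever rate the receiver sustains, and extra protocol overhead is modeled simply as a lower effective drain rate.

```python
import math


def seconds_until_overflow(buffer_bytes: float, fill_rate: float, drain_rate: float) -> float:
    """Time for an empty buffer to overflow; rates are in bytes per second."""
    surplus = fill_rate - drain_rate
    if surplus <= 0:
        return math.inf  # the receiver keeps up, so the buffer never overflows
    return buffer_bytes / surplus


def queueing_delay_s(backlog_bytes: float, drain_rate: float) -> float:
    """Extra latency seen by data that arrives behind an existing backlog."""
    return backlog_bytes / drain_rate


BUFFER = 100e6        # 100 MB protective buffer (assumed)
FILL = 1.25e9         # 10 Gbps camera stream expressed in bytes per second
DRAIN_LOW = 1.15e9    # drain rate during a stall with lower overhead (assumed)
DRAIN_HIGH = 1.00e9   # drain rate during a stall with higher overhead (assumed)

print(f"lower overhead:  overflow after {seconds_until_overflow(BUFFER, FILL, DRAIN_LOW):.2f} s")
print(f"higher overhead: overflow after {seconds_until_overflow(BUFFER, FILL, DRAIN_HIGH):.2f} s")
print(f"latency added by a 50 MB backlog: {queueing_delay_s(50e6, FILL) * 1e3:.0f} ms")
```

In this model a larger buffer delays overflow but does nothing for latency: whatever sits in the buffer is delay added to every image behind it, which is why limiting buffer use matters as much as buffer size.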

How important is multicasting to customers?

The two main considerations in multicasting are distributed processing and system redundancy. Some systems can tolerate downtime, in which case system redundancy can be handled by simply manually swapping out questionable components or waiting for a system to reboot before coming back online. For systems that cannot, multicasting allows the fastest failover implementation.

Some systems have low processing requirements, and that processing can be handled on a single CPU processing node. For more demanding systems, multicasting allows the same image data to be sent to multiple processing nodes at the exact same time for real-time parallel processing.

About Emergent Vision Technologies

Here is a recap of what Emergent is all about…

- 10+ Awards for innovation and pioneering the high speed GigEVision imaging movement

- 10+ years shipping 10GigE cameras with more than 140 models

- 5+ years shipping 25GigE cameras with more than 55 models

- 2+ years shipping 100GigE cameras with more than 16 models

- Camera technology performance leader

- Focused on high-speed Ethernet/GigEVision

- Focused on enabling the processing of high-speed image data

- Area scan and Line scan models

- UV, NIR, Polarized, Color, Mono models for multispectral applications

- Emergent eSDK for full application flexibility

- Emergent eCapture Pro for a highly comprehensive software solution

- Most comprehensive range of product and support for high-speed imaging applications

- Any speed, any resolution, any cable length

- Available NOW!

We are a multi-award winning company with a focus on high speed GigEVision product.

We have many years shipping product ranging in speeds from 10GigE up to 100GigE.

We have a strong focus on providing end-to-end technologies and support for our customers’ applications.

We can fulfill most application needs.

Lastly, products presented are available now.

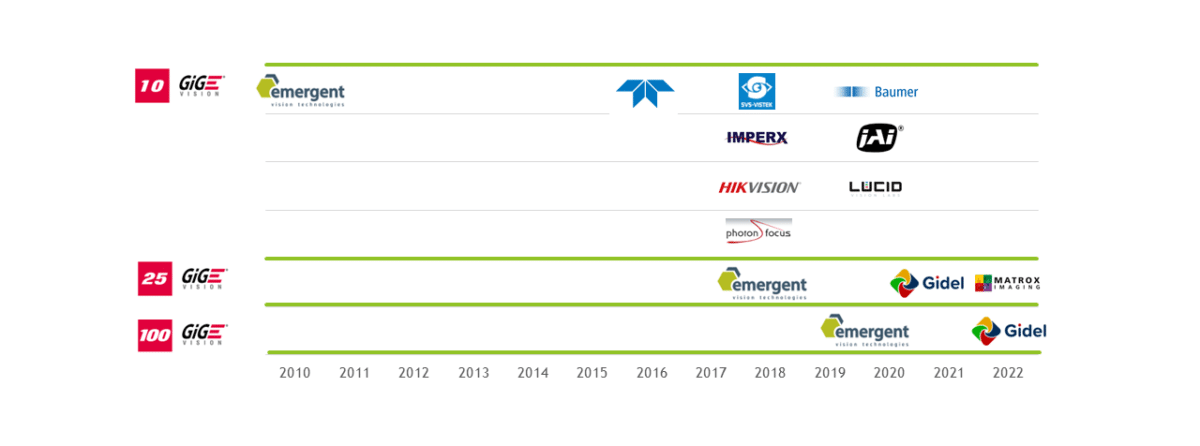

Adoption of 10GigEVision and Higher

Here is a quick snapshot of the adoption of GigEVision products ranging in speeds from 10GigE up to 100GigE. Emergent has shown how top performance can be achieved and has opened up many markets, including machine vision, to the use of such technologies. Some companies are just now leveraging our efforts toward releasing 25G and higher speed products, but they still have a way to go before releasing ratified, high-performance products.

Figure: Emergent Vision Technologies is the first provider of cameras based on 10GigE, 25GigE, 50GigE, and 100GigE interfaces.