Learn Why UDP Is the Optimal GigE Vision Approach Over RDMA and TCP

Image sensor technology continues to advance, delivering higher resolutions and faster frame rates that open new possibilities for machine vision and imaging. These advances also bring challenges, particularly for reliable data transmission: large volumes of image data must often travel over long distances with low latency and tightly controlled jitter. Overcoming these challenges is crucial for success in demanding imaging applications.

To address these challenges, many manufacturers have invested heavily in high-performance data transmission over Ethernet, a scalable technology that forms the foundation of GigE Vision, the leading camera interface technology in the machine vision industry. GigE Vision relies on readily available cabling, switches, and network interface cards (NICs), and it is supported by major operating systems, including Windows and Linux.

Get the Most Out of GigE Vision

The GigE Vision standard was ratified in 2006 by the Automated Imaging Association (AIA), now part of the Association for Advancing Automation (A3). It is built on the User Datagram Protocol (UDP) over Ethernet to deliver image data with low latency and, with a well-designed receiver, reliably. However, as data rates have increased, some manufacturers have struggled to achieve optimal performance with GigE Vision, particularly at 10Gbps and above. Alternative protocols such as the Transmission Control Protocol (TCP), remote direct memory access (RDMA), and RDMA over Converged Ethernet (RoCE) have been proposed to address these difficulties.

Under the GigE Vision standard, data is transmitted using the GigE Vision Streaming Protocol (GVSP) over UDP. Each frame consists of a leader packet, multiple image (payload) packets, and a trailer packet. The camera transmits these packets, and the receiver (typically a PC) is responsible for placing the image data in the appropriate destination buffers. Because UDP is connectionless, with no connection setup or acknowledgments, this approach eliminates unnecessary network overhead. Since UDP does not guarantee delivery, the receiver must be properly designed and configured to prevent packet loss. When it is, this setup delivers maximum performance, minimal latency, and low jitter.
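
The receiver's placement job can be sketched in a few lines of Python. This is a minimal illustration, not a production receiver: it assumes a simplified standard (non-extended-ID) 8-byte GVSP header (16-bit status, 16-bit block ID, one byte carrying the packet format, and a 24-bit packet ID), assumes packet-format values of 1, 2, and 3 for leader, trailer, and payload, and assumes every payload packet except the last carries the same number of bytes. `FrameAssembler`, `parse_header`, and `make_packet` are hypothetical names. Because the destination offset is derived from the packet ID, packets can arrive in any order, and gaps can be reported as candidates for a resend request.

```python
import struct

# Assumed packet-format values; confirm against the GigE Vision specification.
LEADER, TRAILER, PAYLOAD = 1, 2, 3

# Simplified standard-ID GVSP header: status (16 bits), block_id (16 bits),
# then one byte whose low bits hold the packet format, then a 24-bit packet_id.
HEADER = struct.Struct(">HHI")


def parse_header(packet: bytes) -> tuple[int, int, int, int]:
    """Return (status, block_id, packet_format, packet_id) from a packet."""
    status, block_id, infos = HEADER.unpack_from(packet, 0)
    fmt = (infos >> 24) & 0x0F       # packet format
    packet_id = infos & 0x00FFFFFF   # position of this packet within the block
    return status, block_id, fmt, packet_id


def make_packet(fmt: int, block_id: int, packet_id: int, data: bytes = b"") -> bytes:
    """Build a test packet using the simplified header above."""
    return HEADER.pack(0, block_id, (fmt << 24) | packet_id) + data


class FrameAssembler:
    """Place each payload packet of one block at its final buffer offset."""

    def __init__(self, frame_size: int, payload_per_packet: int) -> None:
        self.buffer = bytearray(frame_size)
        self.view = memoryview(self.buffer)
        self.payload_per_packet = payload_per_packet
        # ceil(frame_size / payload_per_packet) payload packets per frame
        self.expected = -(-frame_size // payload_per_packet)
        self.received = set()
        self.trailer_seen = False

    def on_packet(self, packet: bytes) -> None:
        _, _, fmt, packet_id = parse_header(packet)
        if fmt == PAYLOAD:
            # The leader is packet 0, so payload packet k starts at (k - 1) * size.
            # Arrival order does not matter: the offset comes from packet_id.
            offset = (packet_id - 1) * self.payload_per_packet
            data = memoryview(packet)[HEADER.size:]
            self.view[offset:offset + len(data)] = data
            self.received.add(packet_id)
        elif fmt == TRAILER:
            self.trailer_seen = True

    def missing(self) -> list[int]:
        """Payload packet IDs not yet received (candidates for a resend request)."""
        return [k for k in range(1, self.expected + 1) if k not in self.received]
```

Python still copies each payload here; in a high-performance receiver this placement would typically be handled by the driver or NIC, so the image data lands in the buffer without an extra CPU copy.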

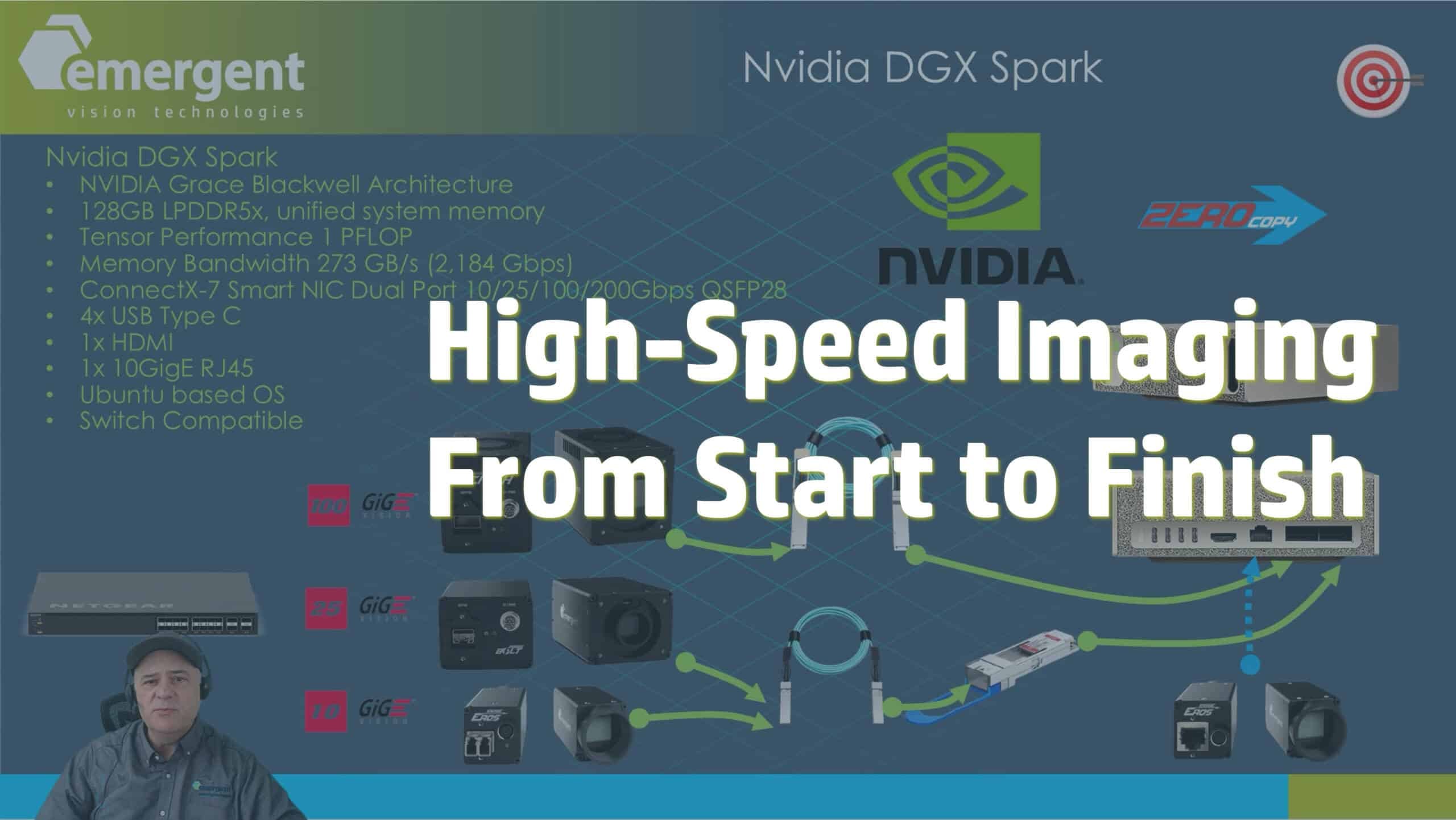

Figure 1: The data path of a conventional GigE Vision + GVSP implementation is not optimized for performance when data rates approach 10Gbps or higher.

However, not all implementations offer the same level of performance. Some manufacturers' GigE Vision implementations rely on software-based header splitting: the host CPU strips the headers from each GVSP packet and copies the image data into a contiguous memory buffer. Although technically compliant, this approach significantly impacts performance, tripling CPU usage and memory consumption. Such a receiver design introduces inefficiencies that raise system cost and limit performance, often constraining 1GigE and 10GigE devices and making 25GigE or 100GigE speeds unattainable. The difficulty of implementing an optimized GigE Vision solution, including an optimized receiver, has prompted some manufacturers to propose alternative transport methods for demanding imaging applications that do not rely on UDP.
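
To see why per-packet software work becomes the bottleneck, it helps to estimate how many packets a receiver must handle each second. The sketch below assumes full line rate, IPv4 without options, no VLAN tag, and an 8-byte GVSP header; the overhead constants encode those assumptions and are not values quoted from the GigE Vision standard.

```python
# Assumed per-packet overheads in bytes (IPv4 without options, no VLAN tag).
ETHERNET_OVERHEAD = 8 + 14 + 4 + 12  # preamble+SFD, MAC header, FCS, inter-frame gap
IP_UDP_GVSP_HEADERS = 20 + 8 + 8     # IPv4, UDP, and GVSP headers


def packets_per_second(link_bps: float, mtu: int) -> float:
    """Maximum packet rate when every packet carries a full MTU at line rate."""
    bits_on_wire = (mtu + ETHERNET_OVERHEAD) * 8
    return link_bps / bits_on_wire


def ns_per_packet(link_bps: float, mtu: int) -> float:
    """Time budget the receiver has to handle each packet, in nanoseconds."""
    return 1e9 / packets_per_second(link_bps, mtu)


def image_bytes_per_packet(mtu: int) -> int:
    """Image data left in each packet after the IP, UDP, and GVSP headers."""
    return mtu - IP_UDP_GVSP_HEADERS


if __name__ == "__main__":
    for gbps in (1, 10, 25, 100):
        for mtu in (1500, 9000):
            rate = packets_per_second(gbps * 1e9, mtu)
            print(f"{gbps:>3} Gbps, MTU {mtu}: {rate:>12,.0f} packets/s, "
                  f"{ns_per_packet(gbps * 1e9, mtu):>8.1f} ns per packet, "
                  f"{image_bytes_per_packet(mtu)} image bytes per packet")
```

Under these assumptions, a 100 Gbps link carrying 9000-byte jumbo frames delivers about 1.38 million packets per second, leaving roughly 723 ns per packet; with a standard 1500-byte MTU the budget drops to about 123 ns. Any header stripping and copying done in software has to fit inside that window for every packet.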

Comparing UDP, TCP, RDMA, and RoCE for GigE Vision

One proposed addition to the GigE Vision standard is TCP, which some argue would remove the need to design and manage header splitting. While TCP might simplify receiver design, it offers little or no performance benefit over UDP. TCP is not designed for real-time streaming, but it does provide data resend and flow control, ensuring reliable delivery. These features come at the expense of overall system performance. As a connection-oriented protocol, TCP adds overhead, including increased memory usage. It also relies on data copies, negating the advantages of a zero-copy design. And because TCP is point-to-point, it gives up traditional GigE Vision benefits such as multicast (point-to-multipoint) transmission. Finally, TCP-based implementations will remain proprietary unless and until a TCP extension to the standard is ratified.
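
Multicast is what lets several PCs receive one GigE Vision stream without the camera sending it more than once. The Python sketch below shows only the receiving side, using standard socket options. It assumes a controlling application has already pointed the camera's stream channel at the multicast group; `make_mreq` and `open_multicast_receiver` are hypothetical helper names, and the group address and port in the usage note are hypothetical too.

```python
import socket
import struct


def make_mreq(group: str, iface: str = "0.0.0.0") -> bytes:
    """Build an ip_mreq: the multicast group address followed by the local interface."""
    return struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton(iface))


def open_multicast_receiver(group: str, port: int, iface: str = "0.0.0.0") -> socket.socket:
    """Open a UDP socket that joins a multicast group on the given interface."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    # Allow several processes on this host to bind the same stream port.
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    # Request a large receive buffer to absorb bursts; the OS may cap this value.
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF, 32 * 1024 * 1024)
    sock.bind(("", port))
    # Join the group so the network delivers the camera's packets to this host.
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP, make_mreq(group, iface))
    return sock
```

For example, every PC that wants the stream could call `open_multicast_receiver("239.192.0.1", 50010)` (hypothetical group and port); the camera sends each packet once and the network replicates it. With TCP, the camera would need a separate connection, and a separate copy of the stream, for each receiver.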

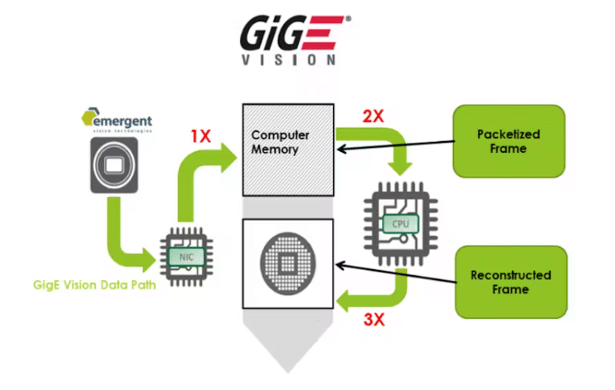

Figure 2: Data path in an optimized UDP implementation of GigE Vision achieves low-latency and reliable data delivery over Ethernet, even at speeds of 10, 25, and 100Gbps.

Another recent proposal is RDMA, specifically RoCE. Like an optimized UDP implementation, RDMA and RoCE deliver image data to the destination buffer with zero copies and do not require header splitting. However, like TCP, they are connection-oriented protocols with resends and flow control, which add overhead and affect overall performance, latency, and jitter. They are also point-to-point technologies and therefore share TCP's limitations, such as the loss of multicast. And like the proposed TCP approach, RDMA- and RoCE-based implementations will remain proprietary until they are ratified.

When implemented correctly, an optimized UDP approach for GigE Vision remains the best choice for achieving low-latency and reliable data delivery over Ethernet, even at speeds of 10, 25, and 100Gbps. Emergent Vision Technologies has a proven track record in this regard, with over 10 years of experience in shipping 10GigE cameras, over 5 years of experience in shipping 25GigE cameras, and over 2 years of experience in shipping 100GigE cameras, all without any data loss.

For a more comprehensive comparison of UDP, TCP, and RDMA for GigE Vision cameras, and the advantages of an optimized UDP approach, explore our in-depth guide. If you have questions or comments, or would like to speak with one of our technical experts, please contact us today.